Cooking Up an AAC Correction Tool: A Recipe for Efficient Communication. Kinda.

Alright, gather ‘round, aspiring communication chefs! We’re about to embark on a culinary adventure – one where we cook up something genuinely useful for the world of Augmentative and Alternative Communication (AAC). This post dives into how we built tools to automatically correct sentences – specifically tackling tricky issues like typos and words mashed together without spaces. My goal here is to explain this in a way that makes sense, even if you’re not a tech wizard! I’ve gone with a cooking analogy. Now, those of you who know me know I love an analogy, but also know that most of the time they are awful. This may be one of them, and I may take it a bit too far in this post.

What we did: We explored two main paths to auto-correction, like choosing between baking a cake from scratch or using a fancy pre-made mix:

- Fine-tuning a Language Model: We took a small but powerful AI model (called t5-small) and taught it to correct noisy sentences. Think of this as perfecting a secret family recipe.

- Developing Algorithmic Code: We also wrote specific computer code designed to fix spelling errors and add spaces where words were run together. This is more like mastering a basic, reliable cooking technique.

- (Slightly less exciting, but... well, spoiler alert: the fastest and “best” option was using an online large language model.)

This project was built some time ago, and while some data might be a bit outdated, the core ideas are still super relevant. You can find all the code at https://github.com/AceCentre/Correct-A-Sentence/tree/main/helper-scripts.

A quick note: If you’re looking for a current solution for an existing AAC system, Smartbox’s Grid3 “Fix Tool” is worth checking out. My work here was done before that tool existed, and it’s why you won’t see me rebuilding our model today!

Hold up. Before we continue: if you do want to do this, I recommend two things! 1. DON’T RUN MY CODE, but look at it by all means and be critical of it! 2. Let me know of improvements, but 3. DON’T GET YOUR LLM TO MAKE THESE SCRIPTS FOR YOU. You’ll learn heaps more if you do it yourself. I say this with a recent history of getting LLMs to churn out code. Do the harder thing. Your brain will thank you.

The Core Ingredient: Why Automatic Correction Matters for AAC

AAC systems need to do a lot of things, but ultimately, for an end user, they need to offer fast ways of getting your thoughts out – and understandable ones at that. This isn’t just my hunch; it’s what we consistently hear from users, staff, and project feedback. The key term here is efficiency.

Sidenote: Efficiency in AAC isn’t just about the output. It could be speedier services, better quality support, or even device improvements like smarter input detection, but just to be clear, for this post we are focusing on efficiency in output.

The challenge often comes from “noisy” input – things like typing fast, or facing physical access challenges. Auto-correction is our secret weapon for cleaning up this messy input and helping users get their message across more effectively.

Unlike prediction (where the system tries to guess what you’ll type next), auto-correction actively fixes mistakes after they’ve been made. Think about your phone – it’s constantly correcting your typos before you even see them. This happens seamlessly in the background, making it feel like your typing is perfect! (And you really notice it when it doesn’t work!) So, why isn’t this standard in AAC, where mistakes are equally common?

Approach 1: Building Algorithmic Correction Tools (Baking Our Own!)

When creating an AAC correction tool, it’s helpful to first understand the common “noisy” sentence types. These are the kinds of input issues we need to fix:

- Ihaveaapaininmmydneck (No spaces and typos – a double whammy!)

- I wanaat a cuupoof te pls (Double key presses – sticky keys, anyone?)

- u want a oakey of cjeese please (Hitting nearby keys – positional errors)

- can u brus my air (Missing letters/deletions – vanishing letters!)

- Can you help me? Can you help me? (Repeated phrases – when a stored message goes wild!)

These sentences are typically short. While word prediction can help create perfect sentences, it often requires significant visual scanning and mental effort, which isn’t always ideal for AAC users.

Sidenote: We actually don’t know this for sure. There are some papers that document these types of errors but in short we don’t have a great grip on what is actually written by AAC users.. more on that later..

Tackling Words Without Spaces and Typos (Writingwitjoutspacesandtypos)

This is one of the trickiest problems. How do you separate words when they’re all jammed together?

Well, it turns out that if you know some Python, there is a clever library called wordsegment. This tool uses information about how common single words (“unigrams”) and two-word phrases (“bigrams”) are to figure out where the word boundaries should be. Here’s an example:

from wordsegment import load, segment

load()

segment('thisisatest')

# Output: ['this', 'is', 'a', 'test']

It’s very effective! However, language is deeply personal. If wordsegment doesn’t know a user’s unique slang or abbreviations (like “biccie” for biscuit), it will struggle. For example, Iwouldlikeabicciewithmycuppatea might become ['i', 'would', 'like', 'a', 'bi', 'ccie', 'with', 'my', 'cuppa', 'tea'].
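If you want a feel for what is going on under the hood, here is a minimal sketch of frequency-based segmentation in plain Python. To be clear, this is not wordsegment's actual code (the real library also uses bigrams and counts from a huge corpus). The tiny `COUNTS` table, the penalty for unknown words, and the `segment_toy` function are all made up for illustration.

```python
import math
from functools import lru_cache

# Hypothetical, tiny word-count table; a real tool uses counts from a huge corpus.
COUNTS = {"this": 50, "is": 80, "a": 100, "test": 20, "at": 40, "his": 30}
TOTAL = sum(COUNTS.values())


def word_score(word):
    """Log-probability of a word; unknown words get a harsh, length-based penalty."""
    if word in COUNTS:
        return math.log(COUNTS[word] / TOTAL)
    return math.log(1 / (TOTAL * 10 ** len(word)))


def segment_toy(text):
    """Split text into the most probable sequence of words."""

    @lru_cache(maxsize=None)
    def best(rest):
        # Returns (score, words) for the best split of the remaining text.
        if not rest:
            return 0.0, ()
        candidates = []
        for i in range(1, len(rest) + 1):
            head, tail = rest[:i], rest[i:]
            tail_score, tail_words = best(tail)
            candidates.append((word_score(head) + tail_score, (head,) + tail_words))
        return max(candidates)

    return list(best(text.lower())[1])


print(segment_toy("thisisatest"))  # ['this', 'is', 'a', 'test']
```

Every possible split gets a score, and the split made of the most common words wins. That is also why a word the table has never seen tends to come out wrong.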

Truth is though, even with slight imperfections, the meaning is often still clear, especially if the user is communicating with a familiar partner. Sometimes, “good enough” is perfectly acceptable! In AAC, “co-construction” – where communication partners work together to understand – is super important. It happens all the time, and it’s fair to assume that no AAC system really works without it. We don’t always need to achieve perfect output.

Adding Our Secret Family Ingredients (Personalizing Algorithmic Tools with User Data!)

Imagine if we could incorporate a person’s actual vocabulary and common phrases into wordsegment’s data. This would significantly improve its accuracy for that specific user. We could take a user’s real-life language and use it to enhance the tool’s understanding, like using your grandma’s secret spice blend!

The wordsegment library allows for this kind of customization. When we did this work we even created specific “typo-heavy” two-word phrases based on real typing errors to make it smarter. This is a solid starting point, though capturing every personal nuance is challenging.
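As a rough sketch of the idea, the snippet below counts single words and word pairs from a user's message history and folds them into a base table. The function names, the `weight` boost, and the made-up base counts are all hypothetical. My understanding is that wordsegment keeps its counts in plain dictionaries that can be updated after `load()`, but treat that as an assumption and check the library's documentation before relying on it.

```python
from collections import Counter


def build_user_counts(messages):
    """Count single words (unigrams) and adjacent word pairs (bigrams)."""
    unigrams, bigrams = Counter(), Counter()
    for message in messages:
        words = message.lower().split()
        unigrams.update(words)
        bigrams.update(" ".join(pair) for pair in zip(words, words[1:]))
    return unigrams, bigrams


def merge_counts(base, user, weight=1000.0):
    """Add user counts to base counts, boosted so personal words can compete."""
    merged = dict(base)
    for key, count in user.items():
        merged[key] = merged.get(key, 0.0) + count * weight
    return merged


history = ["biccie with my cuppa please", "can i have a biccie"]
user_unigrams, user_bigrams = build_user_counts(history)

base_unigrams = {"a": 9_000_000.0, "with": 3_000_000.0}  # made-up corpus counts
personal = merge_counts(base_unigrams, user_unigrams)

print(personal["biccie"])        # 2000.0
print(user_bigrams["a biccie"])  # 1
```

The boost matters because a handful of personal messages would otherwise be drowned out by counts from a corpus of billions of words. How big it should be is something you would have to tune.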

Another option is a “fuzzy” search approach (see this blogpost for some ideas on this). This method is light on memory and doesn’t require powerful computer graphics cards (GPUs), making it suitable for running directly on a device. While it might not be lightning-fast, it could be “quick enough” for many situations. However, it still faces challenges with acronyms, names, and highly unusual abbreviations.
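Here is one way a fuzzy lookup could work, using only Python's standard library (`difflib`). This is a sketch rather than the approach from the blogpost mentioned above: the `VOCAB` list, the `cutoff` value, and the `fuzzy_correct` function are my own assumptions, and the test sentence is a simplified version of one of the noisy examples.

```python
import difflib

# Hypothetical personal vocabulary; in practice this would come from the user's own messages.
VOCAB = ["cup", "of", "tea", "please", "cheese", "biscuit", "want", "a", "i"]


def fuzzy_correct(sentence, vocab=VOCAB, cutoff=0.6):
    """Replace each word with its closest vocabulary entry, if one is close enough."""
    corrected = []
    for word in sentence.lower().split():
        matches = difflib.get_close_matches(word, vocab, n=1, cutoff=cutoff)
        # Keep the original word when nothing in the vocabulary is similar enough.
        corrected.append(matches[0] if matches else word)
    return " ".join(corrected)


print(fuzzy_correct("i wanaat a cuup of te pls"))  # i want a cup of tea please
```

Notice that a word outside the vocabulary (a name, say) is either left alone or dragged towards the nearest known word, which is exactly the weakness with names and unusual abbreviations.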

Approach 2: Customizing Large Language Models (LLMs) (The Fancy Pre-Made Sauce)

What about the powerful Large Language Models (LLMs) like OpenAI’s GPT-3.5 Turbo? Let’s try wrapping our noisy sentences in an OpenAI GPT-3.5 Turbo API and see what happens:

The results are pretty nice. You can immediately see the benefit: users can focus on typing without being distracted by predictions. This could dramatically improve communication speed.
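For the curious, “wrapping” a sentence mostly means sending it along with an instruction. Below is a sketch of the request body for a chat-style API. The prompt wording is hypothetical (it is not the prompt used in this project), and I have left out the actual network call and API key handling.

```python
import json

# Hypothetical wording, not the prompt used in the project.
SYSTEM_PROMPT = (
    "You correct short messages typed by AAC users. Fix typos and add missing "
    "spaces. Reply with the corrected sentence only."
)


def build_correction_request(noisy_text, model="gpt-3.5-turbo"):
    """Build a chat-style request body asking the model to correct one sentence."""
    return {
        "model": model,
        "temperature": 0,  # keep the output as repeatable as possible
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": noisy_text},
        ],
    }


print(json.dumps(build_correction_request("Ihaveaapaininmmydneck"), indent=2))
```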

However, a key consideration with online LLMs is privacy. When communication data is sent to a cloud service, it raises questions about how that data is handled and, to be fair, some users and providers of AAC are nervous about this. While some services offer opt-out options for data being used for model training, the notion of personal communication data residing in the cloud is something to address carefully.

It’s fair to say that whether “sending data to the cloud” is “unacceptable” is a nuanced point. Many people routinely use cloud services for sensitive personal information (like email, documents, or medical records), and some are increasingly comfortable sharing personal data with online AIs (is that a word now?). While some AAC users will undoubtedly prefer to keep all their communication entirely offline, others may not find it unacceptable, especially if there are clear privacy controls in place.

The primary privacy considerations often boil down to:

- User Comfort and Perception: Users might be uncomfortable with the technology if they perceive the privacy risk to be greater than it actually is, or if they don’t trust the AAC providers to have robust privacy controls.

- Provider Controls: The risk of poor privacy or security policies by an individual AAC provider, which could lead to chat data being logged, stored, or mishandled in the cloud.

- Security Measures: The risk of security breaches providing unauthorized access to chat data flowing through APIs.

These risks can and should be mitigated through robust security measures and strong privacy controls, such as not logging or storing chats unnecessarily, and avoiding associating them with user-specific IDs in API calls. If an AAC provider implements these correctly, the benefits of advanced online LLM capabilities might outweigh the concerns for many users, even if it doesn’t allay everyone’s apprehension. But either way - it would be darned easier to explain to end users if data never left the device.

Therefore, for AAC, we need a solution that is:

- Efficient: Quick to cook! Gets you what you meant, accurately and quickly

- Privacy-focused: All in your kitchen, no external diners!

- AAC-text savvy: Understands our unique culinary style!

Training Our Own Language Model for Correction (The Holy Grail: Baking Our Own Language Model!)

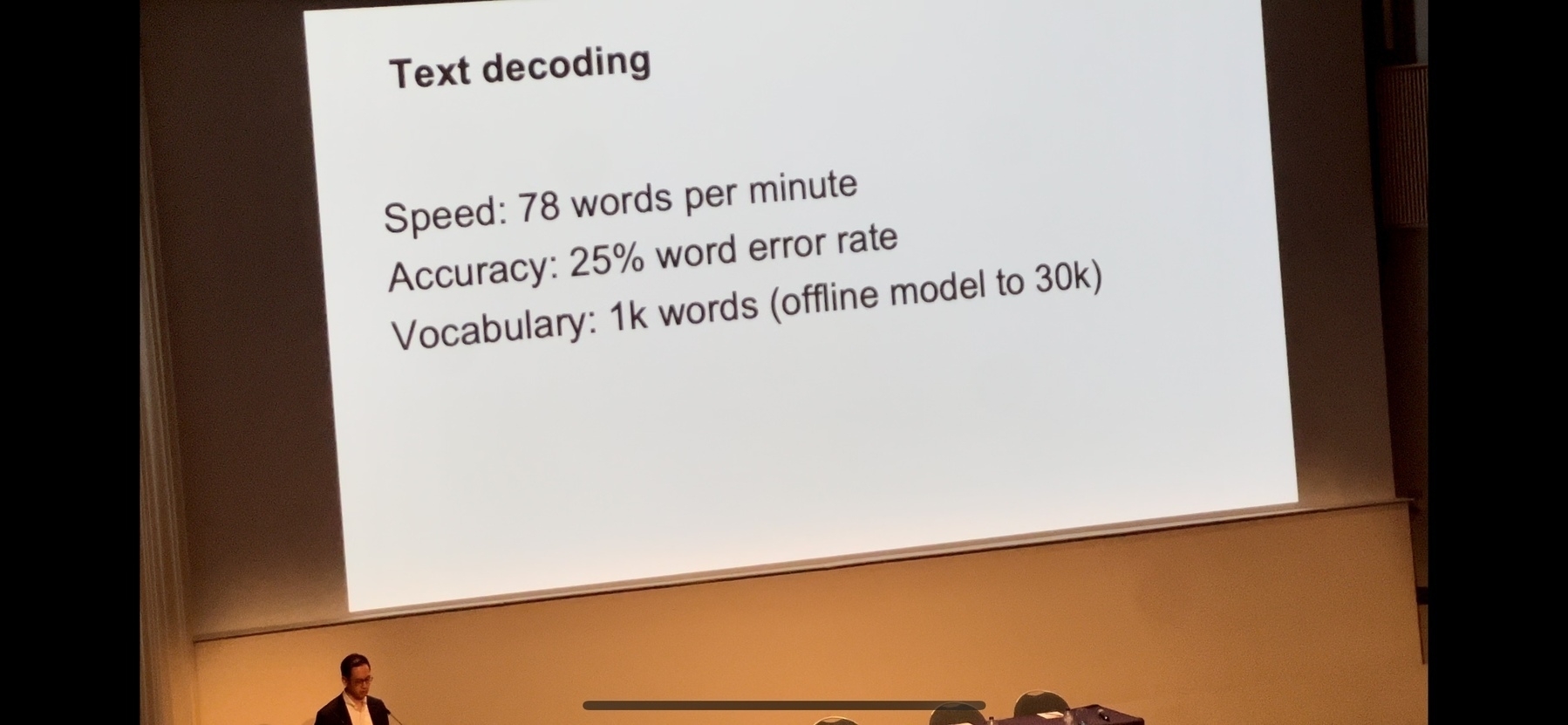

Spoiler alert - This is what we want to make - note this video is all running on device

Building our own language model from scratch sounds daunting, but it’s more achievable than you might think! The fundamental ingredients are:

- Data: A large collection of “noisy” sentences (like actual user input) alongside their perfectly “cleaned” versions. The more this data resembles real AAC communication, the better the model will perform.

- Even More Data: Seriously, data is the most crucial ingredient.

- Code: The “recipe” for teaching the model.

- Computing Resources: Some time and a computer (preferably with a graphics card).

The biggest challenge is DATA. There’s very little publicly available “AAC user dialogue” data. What is “AAC-like text”? It’s a surprising gap in our industry’s research – we don’t have large datasets showing how people actually use AAC. Are stored phrases used all the time? What kind of unique language do users create? We’re often just guessing.

So, we have to “imagine” what AAC-like data looks like. Researchers like Keith Vertanen have made fantastic attempts with artificial datasets. We also believe “spoken” language datasets might be a good starting point, as AAC aims to enable “speaking” through a device. We looked at corpora like:

The problem? These are mostly perfectly transcribed texts, lacking the glorious typos and grammatical quirks our correction tool needs to learn from. We needed truly “noisy” input.

Injecting Noise: Making Our Data Deliberately Messy

To make our pristine datasets messy, we artificially injected errors using tools like nlpaug. While this creates synthetic noise, we also sought out real typos from sources like the TOEFL Spell dataset (essays by English language learners). For grammar correction, we also looked at datasets like JFLEG Data and subsets of C4-200M which contain grammatically incorrect sentences with their corrected versions.
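To show the kind of noise we are talking about, here is a small standard-library sketch that doubles, deletes, or swaps letters for a keyboard neighbour. It is not nlpaug and not the script used in this project. The `NEIGHBOURS` map is partial and hypothetical, and the `rate` value is just a guess.

```python
import random

# Partial QWERTY neighbour map (hypothetical and incomplete; extend as needed).
NEIGHBOURS = {
    "a": "qwsz", "e": "wrsd", "h": "gjyn", "o": "ipkl",
    "p": "ol", "s": "adwx", "t": "ryfg", "u": "yihj",
}


def add_noise(sentence, rate=0.15, seed=None):
    """Randomly double, drop, or swap-for-a-neighbour some letters."""
    rng = random.Random(seed)
    out = []
    for ch in sentence:
        # Leave non-letters alone, and most letters too.
        if not ch.isalpha() or rng.random() >= rate:
            out.append(ch)
            continue
        kind = rng.choice(["double", "delete", "neighbour"])
        if kind == "double":
            out.append(ch * 2)
        elif kind == "neighbour":
            # Letters missing from the map fall back to themselves.
            out.append(rng.choice(NEIGHBOURS.get(ch.lower(), ch)))
        # "delete": append nothing
    return "".join(out)


print(add_noise("i want a cup of tea please", seed=1))
```

Passing a `seed` makes the noise repeatable, which is handy when you want to regenerate exactly the same training set later.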

Our Grand Baking Plan: Data Preparation:

- Take each spoken text corpus.

- Inject typos into the sentences and strip out common grammar elements (like commas and apostrophes, which AAC users often omit).

- Create three versions of each sentence for training:

  - With typos.

  - With typos and compressed (no spaces).

  - The correct, clean version (but still without spaces, to train for de-compression).

- Also, incorporate a grammar baseline layer using the grammar datasets.
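The plan above can be sketched as a function that turns one sentence into three (input, target) training pairs. This is an illustration, not the project's preparation script. I am assuming the target is always the clean sentence, and that `noisy` is a typo-injected copy of `clean` produced elsewhere. The function names are made up.

```python
import string


def strip_punctuation(text):
    """Drop punctuation such as commas and apostrophes."""
    return text.translate(str.maketrans("", "", string.punctuation))


def compress(text):
    """Remove all whitespace so the words run together."""
    return "".join(text.split())


def make_training_pairs(clean, noisy):
    """Return three (input, target) pairs for one sentence."""
    inputs = [
        strip_punctuation(noisy),            # with typos
        compress(strip_punctuation(noisy)),  # with typos and no spaces
        compress(strip_punctuation(clean)),  # correct spelling, no spaces
    ]
    return [(source, clean) for source in inputs]


pairs = make_training_pairs("Can you brush my hair, please?", "Can u brus my air, pleese?")
for source, target in pairs:
    print(f"{source!r} -> {target!r}")
```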

We then used a “text-to-text transformer” model (specifically, the t5-small model) to learn from this data. This type of model is designed to take one text input and transform it into another, making it perfect for correction tasks. We chose t5-small because it’s lightweight and efficient enough to run on a device.

You can find the script for preparing the data here (you’ll need to download the BNC2014 corpus separately). The training process took several hours on a decent GPU. The resulting model is available on Hugging Face.

The Taste Test: How Did Our Bake Perform?

Let’s look at how our different correction techniques performed on a set of 39 test sentences (which contained both compression and typos):

- Algorithmic Approach (Wordsegment + Spelling Engine): Took about 14 seconds to process the test sentences. This approach is surprisingly quick for what it does, and it has no memory issues. However, its major limitation is that it will fail if it encounters words not in its dictionary, or novel grammatical structures.

- Online LLM (Azure/OpenAI GPT Turbo 16K): Took an astonishing 13 seconds! This speed, even with an external network call, is remarkable.

Now, for our custom-trained models:

| Method | Accuracy (%) | Total Time (seconds) | Average Similarity |
|---|---|---|---|
| Inbuilt | 0.0 | 17.32 | 0.93 |
| GPT | 55.56 | 13.29 | 0.92 |
| Happy | 28.95 | N/A | N/A |
| Happy Base | 13.16 | N/A | N/A |
| Happy T5 Small | 0.0 | N/A | N/A |
| Happy C4 Small | 0.0 | 46.90 | 0.81 |
| Happy Will Small | 28.95 | N/A | N/A |
| HappyWill | N/A | 24.67 | 0.93 |

Here are some example results comparing the incorrect sentence to the correct version and outputs from various models:

| Incorrect Sentence | Correct Sentence | Output-Inbuilt | Output-GPT | Output-Happy | Output-HappyBase | Output-HappyT5 | Output-HappyC4Small | Output-HappyWill |
|---|---|---|---|---|---|---|---|---|
| Feelingburntoutaftettodayhelp! | Feeling burnt out after today, help! | feeling burnt out aft et today help | Feeling burnt out after today, help! | Feeling burnt out today help! | Feelingburntoutaftettodayhelp! | Feelingburntoutaftettodayhelp! | Feelingburntoutaftettoday help!! | Feeling burnt out today help! |
| Guesswhosingleagain! | Guess who's single again! | guess who single again | Guess who’ssingle again! | Guess who single again! | Guesswhosingle again! | Grammatik: Guesswhosingleagain! | Guesswhosingleagain!! | Guess who single again! |
| Youwontyoubelievewhatjusthappened! | You won't you believe what just happened! | you wont you believe what just happened | You won't believe what just happened! | You want you believe what just happened! | You wouldn'tbelieve what just happened! | Youwontyoubelievewhatjusthappened! | Youwontyoubelievewhatjust happened!! | You want you believe what just happened! |
| Moviemarathonatmyplacethisweekend? | Movie marathon at my place this weekend? | movie marathon at my place this weekend | Movie marathon at my place this weekend? | Movie Marathon at my place this weekend? | Movie marathon at my place this weekend? | grammar Moviemarathonatmyplacethisweekend? | Moviemarathonatmyplacethis weekend? | Movie Marathon at my place this weekend? |
| Needstudymotivationanyideas? | Need study motivation, any ideas? | need study motivation any ideas | Need study motivation. Any ideas? | Need study motivation any ideas! | Need study motivationanyideas? | Needstudymotivationanyideas? | Needstudymotivationanyideas? | Need study motivation any ideas! |
| Sostressedaboutthispresentation! | So stressed about this presentation! | so stressed about this presentation | So stressed about this presentation! | So stressed about this presentation! | So stressed about this presentation! | Sostressedaboutthispresentation! | Sostressedaboutthispresentation!! | So stressed about this presentation! |
| Finallyfinishedthatbookyourecommended! | Finally finished that book you recommended! | finally finished that book you recommended | Finally finished that book you recommended! | Finally finished that book you're recommended! | Finally finished that book yourecommended! | Finalfinishedthatbookyourecommended! | Finally finished that bookyourecommended!! | Finally finished that book you're recommended! |

Our custom-trained model (HappyWill) actually outperformed GPT in terms of average similarity! While its total time was longer because we ran it on a small cloud machine, it would be much faster on a local device – and, crucially, it keeps all data private.

However, it’s important to note the “inbuilt” algorithmic technique (the Wordsegment one). While its “accuracy” (meaning, how often it perfectly matched the exact expected output) was low for our specific test, its output was surprisingly readable, and it has no privacy or significant memory concerns.
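To make the two scores concrete, here is a sketch of how “accuracy” (exact match) and “average similarity” could be computed. The exact similarity measure used for the table isn't spelled out in this post, so `difflib.SequenceMatcher` is a stand-in I have assumed, and `evaluate` is a made-up name.

```python
from difflib import SequenceMatcher


def evaluate(outputs, expected):
    """Return (exact-match accuracy in %, mean similarity between 0 and 1)."""
    pairs = list(zip(outputs, expected))
    exact = sum(out == exp for out, exp in pairs)
    similarity = sum(SequenceMatcher(None, out, exp).ratio() for out, exp in pairs)
    return 100 * exact / len(pairs), similarity / len(pairs)


expected = ["So stressed about this presentation!", "Guess who's single again!"]
outputs = ["So stressed about this presentation!", "guess who single again"]

accuracy, avg_similarity = evaluate(outputs, expected)
print(f"accuracy={accuracy:.1f}%  similarity={avg_similarity:.2f}")
```

The second sentence counts as a complete miss for accuracy (wrong capital, no apostrophe, no exclamation mark), yet it is perfectly readable and still scores highly on similarity. That is the gap you see in the “Inbuilt” row.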

You can try the inbuilt algorithmic correction yourself for a short while via our API:

curl -X 'POST' \
  'https://correctasentence.acecentre.net/correction/correct_sentence' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{
  "text": "howareyou",
  "correct_typos": true,
  "correction_method": "inbuilt"
}'

Or access the API directly in a browser: https://correctasentence.acecentre.net (just remember to set "correction_method": "inbuilt").
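If you would rather call it from Python, the same request can be sketched with the standard library. The URL, headers, and fields are copied from the curl example. The function names are mine, and I haven't documented the shape of the reply here, so the code simply returns whatever JSON comes back.

```python
import json
import urllib.request

API_URL = "https://correctasentence.acecentre.net/correction/correct_sentence"


def build_request(text, method="inbuilt", correct_typos=True):
    """Build the same POST request as the curl example, without sending it."""
    payload = {
        "text": text,
        "correct_typos": correct_typos,
        "correction_method": method,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"accept": "application/json", "Content-Type": "application/json"},
        method="POST",
    )


def correct_sentence(text):
    """Send the request and return the decoded JSON reply."""
    with urllib.request.urlopen(build_request(text), timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))


# Example (needs network access, and the service may no longer be running):
# print(correct_sentence("howareyou"))
```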

So, the next crucial step in our culinary journey? We need to hunt down, or create, an even richer, noisier, and more authentic corpus of data. The quest for the perfect “ingredients” continues!

(Oh dear.. This cooking analogy really went too far… I do apologise)

Key Takeaways

From this project, we’ve identified a few critical points for developing communication tools:

- Understanding Language Models for Correction (How to Broadly Make a Language Model for Correction): We’ve shown how a custom, fine-tuned language model (like t5-small) can be developed to correct complex errors while keeping user data private and enabling on-device processing. It’s about tailoring the perfect sauce to your needs.

- Algorithmic Approaches Can Be Effective (Algorithmic Approaches May Be As Good In Some Circumstances): For specific problems, like adding spaces or basic spelling, simpler algorithmic approaches can be surprisingly readable and efficient, offering a viable alternative to complex AI models, especially when novel input isn’t a primary concern. This highlights the importance of matching the tool to the specific type of communication challenge – sometimes, a good old stew is just what you need!

- The Need to Understand User Corpora Better (We Need to Understand Better Users Own Corpora): Regardless of the approach, the effectiveness of any correction tool is heavily dependent on the training data. There’s a significant need for more authentic, “noisy” datasets reflecting how AAC users actually communicate. Truly understanding users’ unique vocabulary and communication patterns – their personal “flavor profiles” – is essential for building the most effective tools.